Big Data technology has grown rapidly in recent years, and Big Data tools such as Hadoop are used extensively in many fields. This post discusses what Big Data is, how it works, its categories, attributes, and applications, and its advantages and disadvantages.

What is Big Data

Big Data refers to datasets that are so complex and so large that they exceed the storage capacity and processing power of a conventional computer.

Because these datasets are too large for everyday computing methods, specialized software tools are needed to capture, analyze, share, transfer, manage, and process them.

Function Mechanism of BigData

Big Data supports real-time decision-making by assessing the stream of facts and figures flowing in from social media, logistics, financial, and retail databases.

It helps in understanding the past, predicting the future, and detecting patterns in datasets.

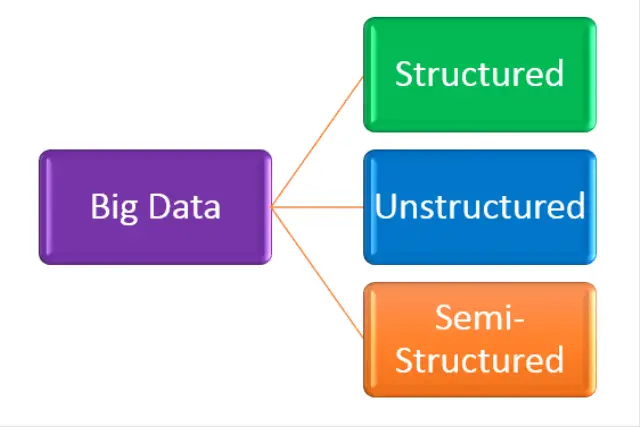

Categories of Big Data

The umbrella of ‘Big Data’ houses three groups, mainly:

- Structured data

- Unstructured data

- Semi-structured data

Fig. 1 – Categories of BigData

Structured Data

Structured data has a fixed, predefined format, which makes it precise and efficient to work with. It is the most systematic category because the data can be stored, retrieved, organized, and manipulated in well-defined ways. This type of data typically resides in relational databases, which makes storage straightforward.

Example: Data warehouses, enterprise systems, databases
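
As a minimal sketch of what a fixed schema looks like in practice, the Python snippet below stores a few rows in an in-memory SQLite table and queries them. The table name `orders`, its columns, and the helper `total_by_customer` are hypothetical and chosen only for illustration.

```python
import sqlite3

def total_by_customer(rows):
    """Load (customer, amount) rows into a fixed-schema table and sum per customer."""
    conn = sqlite3.connect(":memory:")  # throwaway in-memory database
    # Hypothetical schema: every row must fit these two typed columns.
    conn.execute("CREATE TABLE orders (customer TEXT NOT NULL, amount REAL NOT NULL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    result = conn.execute(
        "SELECT customer, SUM(amount) FROM orders GROUP BY customer ORDER BY customer"
    ).fetchall()
    conn.close()
    return result

if __name__ == "__main__":
    print(total_by_customer([("alice", 10.0), ("bob", 5.5), ("alice", 2.5)]))
```

Because every row follows the same schema, grouping and summing can be expressed in a single declarative query.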

Unstructured Data

Unstructured data cannot be neatly organized and typically has no row-and-column structure. Big Data tools such as Hadoop can organize and manage this kind of data, which is often extremely complex, very large, and rapidly changing.

Example: Text documents, Audio/video streams, log files

Semi-Structured Data

Semi-structured data is self-describing: its format is implied by the data itself and can be inferred from it. Records do not all have to share the same fields, and the schema can differ within a single database and change considerably over time.

Example: HTML, XML, RDF
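
To illustrate how the schema can vary from record to record, here is a small Python sketch that parses XML with the standard library. The sample document and the helper `records_from_xml` are hypothetical; the point is only that each record describes its own fields.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample: both records are <person> elements, but their fields differ.
SAMPLE = """
<people>
  <person><name>Ada</name><email>ada@example.com</email></person>
  <person><name>Linus</name><phone>555-0100</phone><city>Helsinki</city></person>
</people>
"""

def records_from_xml(text):
    """Turn each <person> element into a dict keyed by its child tag names."""
    root = ET.fromstring(text)
    return [
        {child.tag: child.text for child in person}
        for person in root.findall("person")
    ]

if __name__ == "__main__":
    for record in records_from_xml(SAMPLE):
        print(sorted(record))  # the set of fields varies from record to record
```

No schema is declared up front; the tag names embedded in the data tell the reader which fields each record carries.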

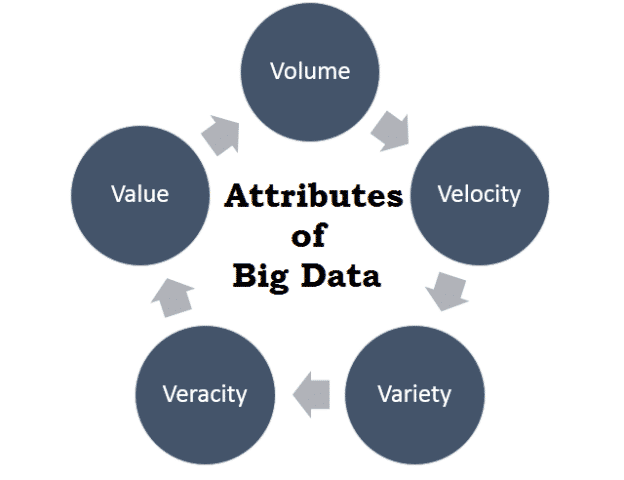

Attributes of Big Data

The attributes of BigData are as follows:

Fig. 2 – Attributes of BigData

Volume of Data

- The amount of data recorded and transacted over time.

- Scaling to handle bulky datasets.

Example: High-resolution sensors

Velocity of Data

- The speed at which data is generated.

- Processing and analysis of streaming data.

Example: Improved connectivity
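
Processing streaming data usually means updating a result as each record arrives instead of waiting for a complete dataset. The following Python sketch shows one simple way to do that, a rolling mean over the most recent readings. The function name `rolling_mean` and the sample readings are assumptions made for illustration, not part of any Big Data tool.

```python
from collections import deque

def rolling_mean(stream, window=3):
    """Yield the mean of the most recent `window` readings as each one arrives."""
    recent = deque(maxlen=window)  # older readings fall off automatically
    for reading in stream:
        recent.append(reading)
        yield sum(recent) / len(recent)

if __name__ == "__main__":
    # Hypothetical sensor readings arriving one at a time.
    for value in rolling_mean(iter([10, 20, 30, 40]), window=2):
        print(value)
```

Only the last few readings are kept in memory, so the approach works no matter how long the stream runs.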

Variety of Data

- Different forms of data.

- Heterogeneous and noisy data.

Example: Structured, unstructured, and semi-structured data

Veracity of Data

- Incoming data from unreliable sources.

- Inaccuracy of the data.

Example: Costing, Source availability issues

Value of Data

- Scientifically relevant data.

- Long-term studies.

Example: Simulation, Hypothetical events

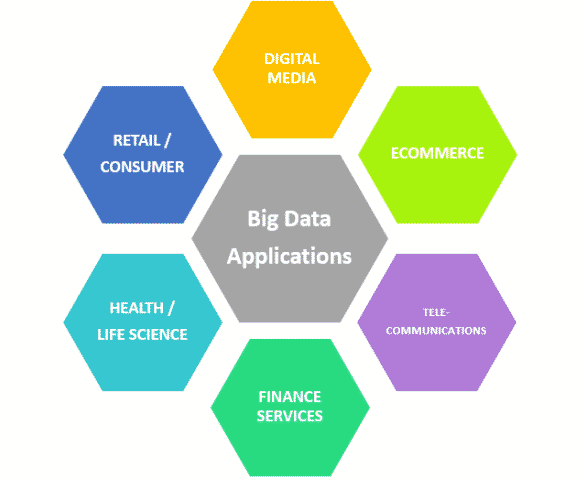

Applications of Big Data

Fig. 3 – Applications of BigData

The applications of Big Data in various fields are as follows:

In Health / Life Science

- Discovering new medicines and developing them further.

- Analysis of disease patterns

In Retail / Consumer

- Managing supply-chains

- Targeting events

- Customer based programs

- Marketing segments

In Digital Media

- Controlling campaigns

- Targeting advertisements

- Click fraud prevention

In Financial Services

- Risk analysis and management

- Fraud detection

- Compliance and regulatory issues

In Ecommerce

- Presenting the right offers at the right time

- Highly targeted, efficient engines that use predictive analytics

Advantages of BigData

Its advantages are as follows:

- It yields valuable insights and helps identify the root causes of issues in real time.

- It has driven strong growth in software, partly because it strengthens cyber surveillance.

- It helps improve health care and enables deeper analysis in digital forensics.

- Because many Big Data tools are open source, they give access to large amounts of information, for example from surveys, and new add-ons appear frequently.

- It provides flexibility in financial markets and enhances how sports are consumed.

Disadvantages of BigData

Its disadvantages are as follows:

- Confidentiality may be breached for certain kinds of data.

- Keeping up with frequent updates requires a lot of agility to keep the data consistent.

- It is not always an accommodating environment for analysts and data-mining specialists, because turning continuously growing datasets into analysis can be an uphill task.

- It is not useful in the short run, and handling such large datasets can be strenuous.

- There are always technical and analytical challenges.

Fig. 4 – BigData Hadoop Tool

Big Data Hadoop Tool

Hadoop is central to the emerging Big Data ecosystem and supports its primary activities. This readily available software framework is used in machine learning applications, predictive analytics, data mining, and similar workloads. Its dominant use is batch processing.

Apache Hadoop is a widely used open-source software framework that lets a cluster of networked computers work together to process very large datasets.

Components of BigData Hadoop Tool

The important components of BigData Hadoop Tool are:

- Hadoop distributed file system (HDFS)

- Hadoop YARN

- Hadoop MapReduce

Hadoop distributed file system (HDFS)

Hadoop distributed file system (HDFS) is used to store the dataset. It has a master/slave architecture, with a NameNode coordinating multiple DataNodes, that is designed for fault tolerance.

Hadoop YARN (Yet Another Resource Negotiator)

Hadoop YARN handles job scheduling and cluster resource management, and sits between HDFS and MapReduce. It dynamically allocates a pool of cluster resources to the applications that request them.

Hadoop MapReduce

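Hadoop MapReduce is the programming model used to process the dataset in parallel across the cluster. A job is split into map tasks, which turn input records into key-value pairs, and reduce tasks, which aggregate the values for each key. Resources are assigned to these designated tasks for the duration of the job.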
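
The map, shuffle, and reduce steps can be sketched in plain Python with the classic word-count example. This is a single-process illustration of the programming model only; it does not use the Hadoop API, and the function names are hypothetical.

```python
from collections import defaultdict

def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]

def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(key, values):
    """Reduce: combine the values for one key into a single result."""
    return key, sum(values)

def word_count(lines):
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())

if __name__ == "__main__":
    print(word_count(["big data", "Big tools"]))
```

In a real cluster the map and reduce calls would run on different machines. The sketch runs them in sequence so that the data flow between the phases is easy to follow.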
